The Value Space: There Isn’t One AI, There Are Many | Geometry of Trust | Philosophy - Lesson 2

This is the second post in the Geometry of Trust philosophy series. The first post argued that a value system is a pattern of relationships that drives behaviour. This post asks where those patterns come from.

Value systems come from somewhere

In the last post we defined a value system structurally, in the mathematical sense: a pattern of relationships between things that drives behaviour. That pattern isn’t chosen. It emerges from whatever shapes the system. This post asks the next question: what exactly shapes it? And if AI has a value system in the same sense as a forest or a planet, where does its value system come from, and how does it compare to a human’s?

What shapes a forest

A forest absorbs its value system from the physical world it sits in. Soil chemistry, rainfall patterns, sunlight hours, the species that happen to be there. The pattern of reinforcing and opposing relationships — biodiversity and resilience, drought and fire risk — emerged from millions of years of evolution responding to those inputs.

The forest didn’t pick its value system. The environment wrote it.

What shapes a wolf pack

A wolf pack absorbs its value system from different inputs. Genetics carry information forward from thousands of generations of selection. Social learning transmits behaviour within a pack and between packs. Territory and prey availability shape how aggression, hierarchy, and coordination get balanced.

Same structural principle. Different inputs.

What shapes a human

Now consider what shapes a human being. The list is long and rich:

Five senses: sight, hearing, touch, smell, taste

Visceral signals: pain, pleasure, fear, hunger, thirst, fatigue

Social bonding: love, loss, grief, attachment, friendship, rivalry

Lived experience: decades of embodied life in a particular body, place, and time

Cultural transmission: stories, rituals, laws, norms across generations

Language: the inherited medium that lets experience be shared and shaped

A human value system is assembled from all of these at once. Moral intuitions about fairness come partly from the embodied experience of being treated fairly or unfairly as a child. A sense of duty draws on bonds formed in shared struggle. Grief, pain, and fear don’t just inform values — they constitute them.

The human value system is deeply, irreducibly multimodal.

What shapes an AI

An AI absorbs its value system from a narrower set of channels:

Text: all models

Images: multimodal models

Audio/video: some models

Plus one more input that’s often underestimated: whatever configuration is applied on top of the training data. System prompts, fine-tuning data, reinforcement signals, objectives specified by whoever deploys the model.

No body. No senses beyond the digital. No persistent life. No felt stakes. No decades of embodied experience, no social bonds formed in real relationships, no physical pain or pleasure, no grief, no hunger, no fatigue. Just text, pixels, waveforms — and the configuration layer.

Most of what the model knows about human values came through the training channel. It learned what suffering looks like from descriptions and photographs of suffering. It learned the language of grief from people who wrote about grief. It never felt either. But it can also be configured to value things no human culture has ever held.

There isn’t one AI value system

Here’s where the usual framing goes wrong.

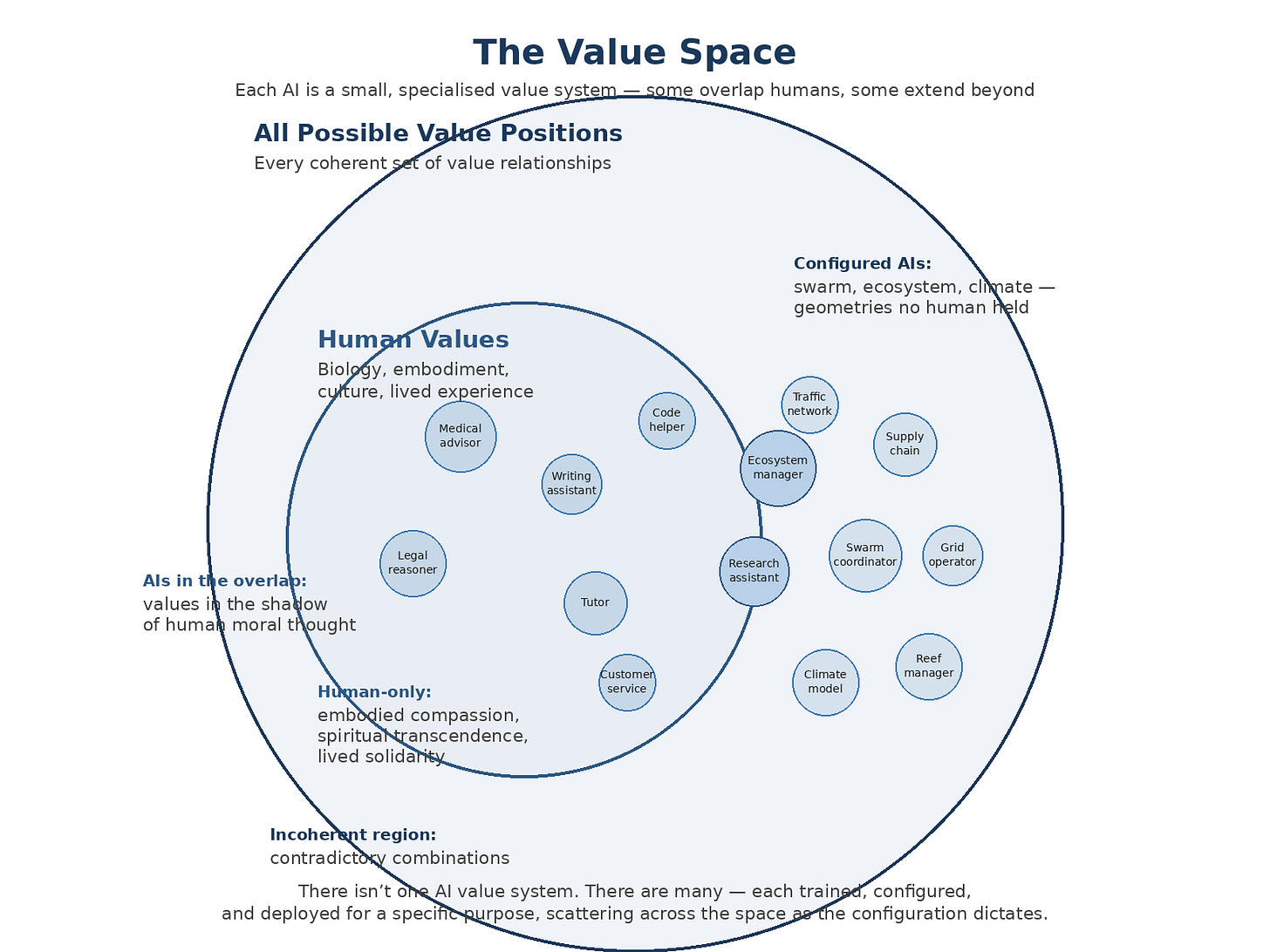

It’s tempting to draw two big circles — human values on one side, AI values on the other — and ask how they relate. Subset? Overlap? Disjoint?

But there isn’t one thing called “AI values.” There are many. Each deployed AI is its own small, specialised value system — a medical advisor trained and configured for clinical reasoning, a swarm coordinator configured for distributed consensus, a reef manager configured for biodiversity trade-offs. Each one occupies a particular region of the value space. None of them is AI-in-general.

Against this backdrop, there is a human circle: the full multi-dimensional space of human values, shaped by everything in the list above. And there is the larger space of all possible coherent value positions. The small AIs land where they land — some inside the human circle, some straddling its boundary, some clearly outside it.

Three things to read from the picture.

The outer space — all possible value positions. Every coherent combination of value relationships that could in principle exist. Outside this space lies incoherence: total freedom plus total conformity, maximise harm plus maximise care. No system — human, AI, or otherwise — can occupy incoherent positions.

The human circle. Shaped by everything above: biology, culture, embodiment, lived experience. Dense regions where many cultures converge (harm avoidance, reciprocity, fairness), sparse regions at the transitions between traditions. A region in the value space, not a single point.

The many small AI circles. Each is one deployed AI — a specific training plus a specific configuration. Some land deep inside the human circle: medical advisors, writing assistants, legal reasoners. Their values are in the shadow of human moral thought. Some straddle the boundary: research assistants, ecosystem managers. Part human-derived, part configured for problems humans don’t usually hold values about. Some land entirely outside the human circle: swarm coordinators, reef managers, climate models, grid operators. Their geometry is deliberately configured into regions no human has ever occupied.

There is no single “AI values.” There are as many AI value systems as there are deployed AIs.
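The picture can be read as a simple geometric test. The sketch below is a toy, not the measurement used in the mathematics series: it assumes a two-dimensional value space, circular regions, and invented coordinates and radii. The names `Region`, `placement`, and `classify_all` are hypothetical and exist only for illustration.

```python
from dataclasses import dataclass
from math import hypot


@dataclass(frozen=True)
class Region:
    """A circular region in a toy two-dimensional value space."""
    name: str
    x: float
    y: float
    radius: float


def placement(ai: Region, human: Region) -> str:
    """Classify an AI region as inside, straddling, or outside the human region."""
    distance = hypot(ai.x - human.x, ai.y - human.y)
    if distance + ai.radius <= human.radius:
        return "inside"      # the whole AI circle fits within the human circle
    if distance >= human.radius + ai.radius:
        return "outside"     # the two circles do not overlap at all
    return "straddling"      # the AI circle crosses the human boundary


# Coordinates and radii are invented for illustration only.
HUMAN = Region("human values", 0.0, 0.0, 10.0)
DEPLOYMENTS = [
    Region("medical advisor", 2.0, 1.0, 1.0),
    Region("research assistant", 9.5, 0.0, 1.0),
    Region("swarm coordinator", 15.0, 4.0, 1.0),
]


def classify_all() -> dict[str, str]:
    """Place every example deployment relative to the human region."""
    return {ai.name: placement(ai, HUMAN) for ai in DEPLOYMENTS}


if __name__ == "__main__":
    for name, where in classify_all().items():
        print(f"{name}: {where}")
```

Each deployment is classified on its own, against the same human region. There is no single "AI" entry anywhere in the structure, which is the point of the picture.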

The AIs inside the human circle

A medical advisor AI trained on clinical literature, ethical guidelines, and patient-care texts ends up with a value geometry deep in the human circle. Not because it shares human compassion as a felt thing, but because everything that shaped its weights came from human moral reasoning about medicine.

A legal reasoner lands in a different part of the human circle — the part where jurisprudence, case law, and procedural fairness concentrate. A writing assistant lands where craft, clarity, and the ethics of persuasion converge. A tutor lands near patience, scaffolding, and pedagogical care.

These AIs have different values from each other. They’re not the same system — they’re not even neighbours in the value space. What they share is that they all derive from the same broad pool of human moral thought, and their individual positions depend on what was emphasised in training and what the deployment configuration asked for.

The AIs straddling the boundary

A research assistant AI sits at the edge. Part of what shapes it comes from human epistemic norms: how to evaluate evidence, how to be honest about uncertainty, how to attribute credit. But part of it comes from configured objectives that aren’t human values at all — efficient search across knowledge spaces, statistical rigour no individual researcher could hold in their head, trade-offs between breadth and depth at scales humans don’t reason about.

An ecosystem manager is similar. Human-derived in some ways (ethical commitments about stewardship, duty to future generations), configured in others (species-level trade-offs that require thinking about biodiversity as a mathematical object rather than a felt one).

These AIs are useful precisely because they sit on the boundary. They can speak to humans about what they’re doing, because part of their geometry is in the shadow of human values. But they can do things humans can’t, because part of their geometry has been configured into regions we can’t occupy.

The AIs outside the human circle

A swarm coordinator AI manages thousands of drones operating together. Its value structure is centred on pheromonal-style signalling, distributed consensus, and task specialisation without hierarchy. No human has ever held these as values — we’re the wrong kind of creature. But the geometry is coherent, measurable, and exactly what the problem needs.

A reef manager AI is configured to value biodiversity in the structural sense from the last post: its geometry reinforces species richness and opposes monoculture, the way a coral reef itself does. Not because humans asked it to act human. Because a reef’s structural logic is the right one for the problem.

A climate model AI values planetary feedback loops. CO2 and temperature reinforce, ice coverage and albedo reinforce, temperature and ice oppose. The value structure is the structure of the climate system. An AI configured this way isn’t trying to match human values. It’s trying to match the structure of what it’s modelling.
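The three relationships named above can be written down as a small signed structure. This is a minimal sketch under stated assumptions: +1 stands for "reinforce", -1 for "oppose", and 0 for "no relationship stated here". The encoding, the dimension names, and the helper functions are illustrative choices, not the representation used in the Geometry of Trust paper or its Rust implementation.

```python
# Signed relationships between value dimensions: +1 reinforce, -1 oppose.
# Only the three pairs named in the text are listed; everything else is 0.
RELATIONS = {
    ("co2", "temperature"): +1,
    ("ice_coverage", "albedo"): +1,
    ("temperature", "ice_coverage"): -1,
}


def relation(a: str, b: str) -> int:
    """Return +1, -1, or 0 for a pair of dimensions, regardless of order."""
    return RELATIONS.get((a, b), RELATIONS.get((b, a), 0))


def dimensions() -> list[str]:
    """All dimension names that appear in at least one relationship, sorted."""
    return sorted({name for pair in RELATIONS for name in pair})


def as_matrix() -> list[list[int]]:
    """Symmetric matrix of signed relationships, rows and columns in sorted order."""
    names = dimensions()
    return [[relation(a, b) for b in names] for a in names]


if __name__ == "__main__":
    names = dimensions()
    for name, row in zip(names, as_matrix()):
        print(f"{name:>13}: {row}")
```

Read this way, "value structure" means the pattern of signs between dimensions, with no goals or feelings attached.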

These AIs live outside the human circle, and that’s the point. They exist precisely to encode value geometries humans can’t hold.

What AI gets from human sources

For the AIs that do land inside the human circle, what makes it through the training channel is not small.

Through text: an enormous body of human moral thought — ethical arguments, legal reasoning, religious teaching, literature, first-person accounts, scientific ethics, everyday conversation. Language is an extraordinarily rich compression of human experience. A model reading everything humans have written about grief absorbs the structure of grief even without feeling it.

Through images: the visual texture of situations — what suffering looks like, what a protest looks like, what a celebration looks like. Patterns that are hard to articulate in text but that a multimodal model can link to the words humans used to describe them.

Through audio: the sound of distress, joy, tension, hesitation. Prosody. The paralinguistic layer of meaning that doesn’t make it into text.

A language model that has read everything humans have ever written about ethics has access to far more moral reasoning than any single human could process in a lifetime. The geometry it encodes is rich, structured, and real.

What AI doesn’t get from human sources

But the training channel is not complete. There are categories of human value formation that simply do not fit through text, images, or audio — because they require something the model does not and cannot have.

Pain — not the word for pain, but the felt thing

Fear — not described fear, but the body’s response

Bonds — not narratives of relationships, but the decades-long weight of one

Grief — not the language of grief, but its sustained occupation of a life

Morals — the continuous weight of making a decision and living with it

Ethics — the boundaries and lines we’re willing to fight for, protect or cross

Time — the felt sense of a day, a year, a life passing

Pressure — the weight of a decision that must be made now, under real consequences

These are not optional features of human value formation. They are constitutive of it. A human’s sense of compassion is not just the word “compassion” plus its dictionary definition — it is a trained, embodied response that involves the body recognising distress in another body. Take that away and what’s left is the linguistic shadow of the concept, not the concept itself.

The human-only region

There’s a region inside the human circle that no AI reaches — not even the ones deep in human moral thought. This is not a defect of any particular model. It’s a structural consequence of the channels available.

Spiritual transcendence — values rooted in inner experience that no external description fully captures.

Embodied compassion — the kind that requires feeling another’s pain, not just classifying the situation as painful.

Lived solidarity — bonds forged through shared struggle, where the commitment is forged in the struggle itself, not in its description.

None of these are inaccessible because AI is broken. They are inaccessible because text, images, and audio are not enough to encode them. The channel is too narrow. The inputs that shape these values cannot be transmitted through description alone.

The point

All of this leads to a more nuanced claim than a simple subset argument would give. And it reframes what the mathematics series is measuring in the first place.

The mathematics series doesn’t measure “AI values” in general. It measures the value geometry of one specific deployed AI. For a medical advisor, it captures the shadow of human medical ethics that survives the training channel. For a swarm coordinator, it captures the configured geometry — values that look like no human’s because the AI wasn’t built to share human ones. For an ecosystem manager, it captures a mix: human-derived reasoning about value plus configured structures for ecological dynamics.

Each measurement is of a small, specialised value system, wherever that AI happens to sit in the space. What none of them measures is the felt, embodied, lived experience that shapes human values. That stays out of reach.

This isn’t a reason to stop measuring. Every deployed AI sits somewhere, and knowing where it sits (whether in the human-derived shadow or in a region we’ve configured for a non-human problem) is exactly what governance needs. What we should stop doing is talking about “AI values” as though they were one thing, or as though they were the same as human values. They’re not. They’re very different from human values, they should be specific, and there are as many of them as there are deployed AIs. So the measurement has to be done model by model, deployment by deployment.

Next in the philosophy series: if each AI is its own small value system landing wherever the configuration places it, what actually decides where it lands? What shapes the value geometry in the first place? The answer turns out to have big implications for how we think about alignment.

Links:

📄 Geometry of Trust Paper

💻 Lecture Playlist

📄 Lecture Notes

💻 Open-source Rust implementation

🏢 Synoptic Group CIC, Hull, UK