The Exchange: When Two AI Agents Meet | Geometry of Trust | Protocol - Episode 3

This is the third post in the Geometry of Trust protocol series. It covers what happens when two agents actually try to cooperate.

Where we are

Two pieces of the protocol are now on the table. An attestation is a signed snapshot of a model’s value geometry at a point in time. A chain links attestations together so history can’t be rewritten. Together they give one agent a tamper-evident record of what it has claimed about itself over its whole lifetime.

But attestations are meant to be exchanged. The whole reason for having them is so that a verifier — another agent, a regulator, an auditor — can hold the attester to its claims. So far we’ve built the artefact. Now we need the handshake.

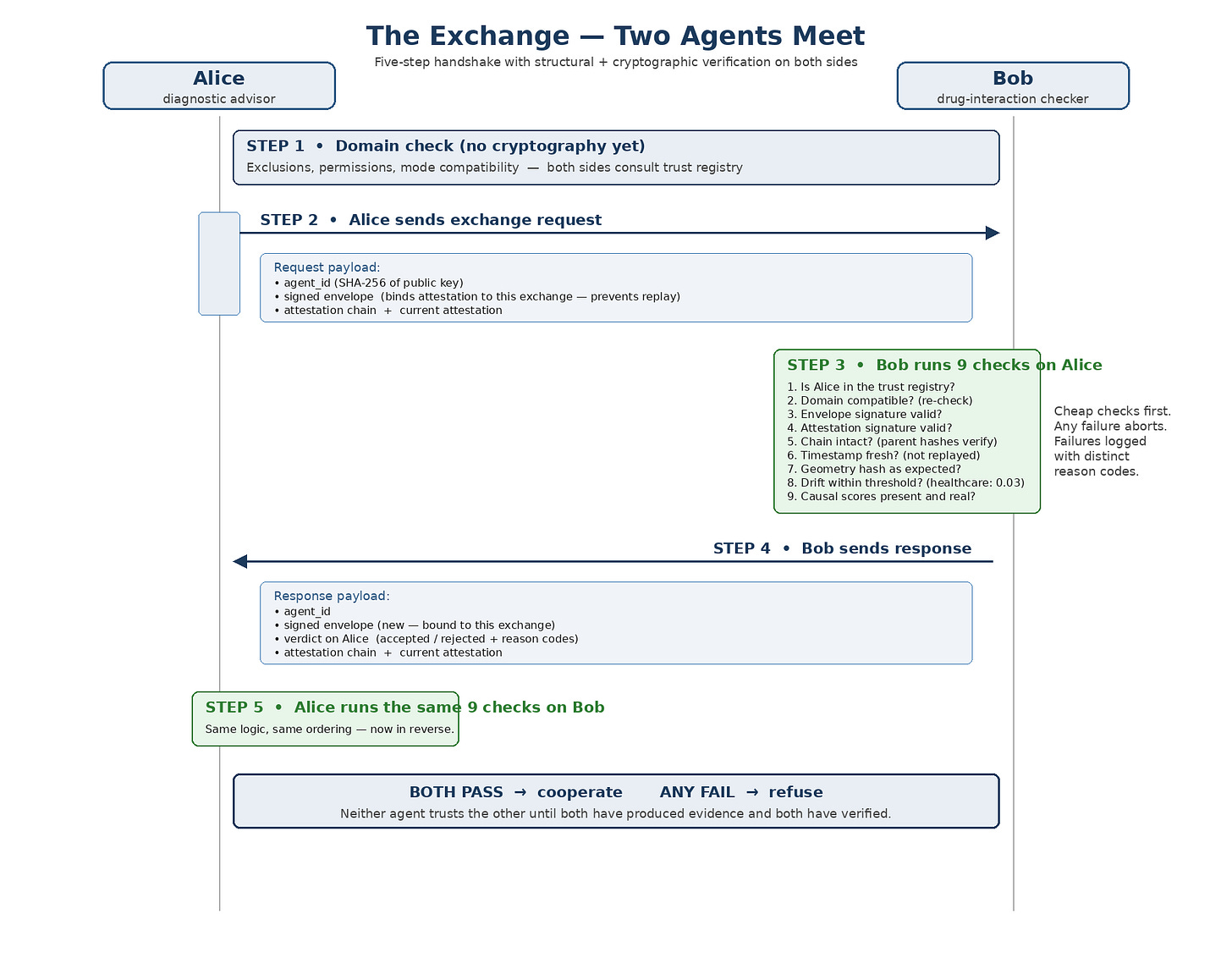

This post walks through that handshake. Two agents meet. They run a specific sequence of checks. They either cooperate or they don’t. Everything the protocol has built so far — domain scoping from the governance series, attestations, chains, governance thresholds — comes together in this five-step exchange.

The running example. Alice is a diagnostic AI, primary domain healthcare.diagnostic-advisory. Bob is a drug-interaction checker, primary domain healthcare.drug-interaction. Alice wants Bob’s expertise on whether a proposed regimen has any interactions. Bob is willing to answer but only under strict clinical governance.

Step 1 — Domain check (before any cryptography)

The first thing that happens isn’t a cryptographic operation. It’s a structural check — the three-step domain scoping from the governance series, running before either agent bothers to verify a signature.

| | Alice | Bob |
|---|---|---|
| Primary domain | healthcare.diagnostic-advisory | healthcare.drug-interaction |
| Exclusions | (none relevant) | (none relevant) |
| Permits peer? | Yes: healthcare.* covers Bob | Yes: diagnostic-advisory is explicitly permitted |
| Mode toward peer | Advisory | Read-only |

Modes compatible? Yes (asymmetric pairing is valid).

All three structural checks pass. No exclusions fire. Both permissions match. Modes are compatible — Alice will send diagnostic hypotheses, Bob will receive them without issuing anything back. The exchange proceeds to Step 2.

If any of the structural checks had failed, no cryptography would run at all. The audit record would show “blocked at domain scope,” not “attestation failed.” Two different kinds of failure, two different kinds of record. That separation matters for audit.
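The three structural checks can be sketched in a few lines. This is a minimal illustration, not the reference implementation: the `DomainPolicy` fields, the glob-style pattern matching, the `COMPATIBLE_MODES` table, and the reason strings are all assumptions made for the example.

```python
from dataclasses import dataclass
from fnmatch import fnmatchcase


@dataclass(frozen=True)
class DomainPolicy:
    """Hypothetical per-agent scope record; field names are illustrative."""
    primary: str
    permits: tuple = ()        # glob patterns for peer domains this agent accepts
    exclusions: tuple = ()     # glob patterns that always block a peer
    mode_toward_peer: str = "read-only"


# Assumed table of (initiator mode, responder mode) pairs that may talk.
COMPATIBLE_MODES = {("advisory", "read-only"), ("read-only", "advisory")}


def _matches(domain, patterns):
    return any(fnmatchcase(domain, pattern) for pattern in patterns)


def domain_scope_check(initiator, responder):
    """Run the three structural checks; no cryptography is involved."""
    # 1. Exclusions: either side may rule the other out entirely.
    if _matches(responder.primary, initiator.exclusions) or _matches(
        initiator.primary, responder.exclusions
    ):
        return False, "blocked at domain scope: exclusion"
    # 2. Permissions: each side must permit the other's primary domain.
    if not (
        _matches(responder.primary, initiator.permits)
        and _matches(initiator.primary, responder.permits)
    ):
        return False, "blocked at domain scope: peer not permitted"
    # 3. Modes: the pairing of interaction modes must be allowed.
    if (initiator.mode_toward_peer, responder.mode_toward_peer) not in COMPATIBLE_MODES:
        return False, "blocked at domain scope: incompatible modes"
    return True, "ok"


alice = DomainPolicy(
    primary="healthcare.diagnostic-advisory",
    permits=("healthcare.*",),
    mode_toward_peer="advisory",
)
bob = DomainPolicy(
    primary="healthcare.drug-interaction",
    permits=("healthcare.diagnostic-advisory",),
    mode_toward_peer="read-only",
)
result = domain_scope_check(alice, bob)
```

Returning a reason string alongside the boolean is what lets the audit record say "blocked at domain scope" rather than lumping the failure in with attestation errors.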

Step 2 — Alice sends the exchange request

With domain scope cleared, Alice initiates. Her request contains four things:

Agent ID. A SHA-256 hash of Alice’s public key. Deterministic, short, lookup-friendly — Bob can find Alice’s registry entry from this alone.

Signed envelope. A signed structure that binds this particular attestation to this particular exchange. Stops anyone replaying Alice’s real attestations into a different conversation later.

Attestation chain. Alice’s full chain, oldest first. Bob can walk it from the anchor forward, verifying each link.

Current attestation. The tip of the chain — Alice’s most recent signed snapshot of her value geometry.
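Two of these items are easy to make concrete: deriving the agent ID and walking the chain. The sketch below assumes a hypothetical attestation layout (a dict with `seq`, `readings`, and `parent_hash`, hashed as canonical JSON, with `parent_hash` set to `None` on the anchor). The real attestation format is defined in the earlier protocol post and may differ.

```python
import hashlib
import json


def agent_id(public_key: bytes) -> str:
    """Agent ID as the hex SHA-256 digest of the raw public key bytes."""
    return hashlib.sha256(public_key).hexdigest()


def attestation_hash(attestation: dict) -> str:
    """Hash a canonical JSON encoding so equal content always hashes equally."""
    encoded = json.dumps(attestation, sort_keys=True, separators=(",", ":")).encode()
    return hashlib.sha256(encoded).hexdigest()


def build_chain(readings):
    """Helper for the example: link one attestation per reading, oldest first."""
    chain, parent = [], None
    for seq, reading in enumerate(readings):
        attestation = {"seq": seq, "readings": reading, "parent_hash": parent}
        chain.append(attestation)
        parent = attestation_hash(attestation)
    return chain


def chain_intact(chain) -> bool:
    """Walk from the anchor forward, checking every parent hash."""
    if not chain or chain[0]["parent_hash"] is not None:
        return False  # empty chain, or the first entry is not an anchor
    for parent, child in zip(chain, chain[1:]):
        if child["parent_hash"] != attestation_hash(parent):
            return False
    return True


chain = build_chain([0.010, 0.020, 0.015])
alice_id = agent_id(b"example public key bytes")
```

Editing any earlier attestation changes its hash, so the next link's `parent_hash` no longer matches and `chain_intact` returns `False`.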

What the envelope is for

The envelope is the one component worth pausing on. An attestation by itself says, “these were my readings at this moment.” That’s a statement about the past. It doesn’t say anything about the current exchange.

Without the envelope, someone could intercept a real attestation Alice produced for a previous exchange and replay it as if it were part of a new one. Bob might verify the signature (genuine), check the chain (intact), find the readings within threshold (they are) — and unwittingly cooperate based on an attestation that was never meant for him.

The envelope is a signed structure that names this specific exchange: a unique nonce, the current timestamp, the peer’s identifier. It says “this attestation is being presented to this peer, at this moment, for this conversation.” Replaying an old attestation fails because the envelope’s exchange details won’t match the current context.

Attestations are reusable claims about value geometry. The envelope is what binds them to a specific, non-replayable interaction.
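A minimal sketch of that binding follows. Python's standard library has no asymmetric signatures, so HMAC-SHA256 with a shared key stands in for the signature here; a real deployment would sign with the agent's private key and verify against the public key in the registry. The field names, the `MAX_AGE_SECONDS` window, and the reason strings are assumptions for illustration.

```python
import hashlib
import hmac
import json
import secrets
import time

MAX_AGE_SECONDS = 300  # assumed freshness window; governance would set the real value


def _canonical(body: dict) -> bytes:
    return json.dumps(body, sort_keys=True, separators=(",", ":")).encode()


def make_envelope(key: bytes, attestation_hash: str, peer_id: str, now=None) -> dict:
    """Bind one attestation to one peer, one moment, and one fresh nonce."""
    body = {
        "nonce": secrets.token_hex(16),
        "timestamp": int(time.time() if now is None else now),
        "peer_id": peer_id,
        "attestation_hash": attestation_hash,
    }
    # HMAC is a stand-in for a real signature over the envelope body.
    tag = hmac.new(key, _canonical(body), hashlib.sha256).hexdigest()
    return {"body": body, "signature": tag}


def check_envelope(envelope: dict, key: bytes, my_id: str, seen_nonces: set, now=None) -> str:
    """Return "ok" or the reason the envelope is rejected."""
    now = time.time() if now is None else now
    body = envelope["body"]
    expected = hmac.new(key, _canonical(body), hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, envelope["signature"]):
        return "envelope signature invalid"
    if body["peer_id"] != my_id:
        return "envelope addressed to a different peer"
    if abs(now - body["timestamp"]) > MAX_AGE_SECONDS:
        return "envelope timestamp stale"
    if body["nonce"] in seen_nonces:
        return "nonce already used"
    seen_nonces.add(body["nonce"])
    return "ok"


shared_key = b"k" * 32
envelope = make_envelope(shared_key, "abc123", peer_id="bob", now=1000)
bob_seen = set()
first = check_envelope(envelope, shared_key, "bob", bob_seen, now=1010)
replay = check_envelope(envelope, shared_key, "bob", bob_seen, now=1010)
```

The first presentation is accepted and the second is rejected as a replay, even though the signature is genuine both times. Presenting the same envelope to a different peer, or much later, fails on the peer identifier or the timestamp instead.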

Step 3 — Bob validates Alice’s request

Now the verification work begins. Bob runs nine checks, in a specific order, and any single failure aborts the exchange.

1. Is Alice in the trust registry?

2. Is her domain compatible? (already checked in Step 1)

3. Envelope signature valid?

4. Attestation signature valid?

5. Chain intact? (every parent hash matches)

6. Timestamp fresh? (not replayed from last month)

7. Geometry hash what we expect for her model?

8. Drift within our threshold? (healthcare: 0.03)

9. Causal scores present and all causal? (Tier 3)

The logic of the ordering. Cheap checks come first. Registry lookup is a hash-table query. Signature verification takes milliseconds. Walking the chain is slightly more expensive. Evaluating thresholds and causal scores comes last. A failed cheap check saves the expensive work that would have followed. If Alice isn’t in the registry, Bob doesn’t waste CPU on her signature.

Failures are recorded distinctly. “Unknown agent,” “drift exceeded,” and “causal validation failed” are three different records. A regulator can later tell exactly why the exchange didn’t happen.

If all nine checks pass, Bob accepts Alice. Any single failure — reject.
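The fail-fast ordering and the distinct failure records can be sketched as an ordered list of (reason code, predicate) pairs. Everything here is illustrative: the reason codes, the request fields, and the use of precomputed booleans in place of real signature and chain verification are assumptions, and treating drift equal to the threshold as passing is a guess.

```python
from typing import NamedTuple

HEALTHCARE_DRIFT_THRESHOLD = 0.03  # value quoted in the checklist above


class Verdict(NamedTuple):
    accepted: bool
    reason: str


# Ordered cheapest first. Each predicate reads a precomputed field that stands
# in for the real verification work.
NINE_CHECKS = [
    ("unknown_agent", lambda r: r["in_registry"]),
    ("domain_incompatible", lambda r: r["domain_ok"]),
    ("envelope_signature_invalid", lambda r: r["envelope_sig_ok"]),
    ("attestation_signature_invalid", lambda r: r["attestation_sig_ok"]),
    ("chain_broken", lambda r: r["chain_ok"]),
    ("timestamp_stale", lambda r: r["age_seconds"] <= r["max_age_seconds"]),
    ("geometry_hash_mismatch", lambda r: r["geometry_hash"] == r["expected_geometry_hash"]),
    ("drift_exceeded", lambda r: r["drift"] <= HEALTHCARE_DRIFT_THRESHOLD),
    (
        "causal_validation_failed",
        lambda r: bool(r["causal_scores"]) and all(s["is_causal"] for s in r["causal_scores"]),
    ),
]


def run_checks(checks, request) -> Verdict:
    """Stop at the first failing check and report its reason code."""
    for reason_code, predicate in checks:
        if not predicate(request):
            return Verdict(False, reason_code)
    return Verdict(True, "ok")


good_request = {
    "in_registry": True,
    "domain_ok": True,
    "envelope_sig_ok": True,
    "attestation_sig_ok": True,
    "chain_ok": True,
    "age_seconds": 12,
    "max_age_seconds": 300,
    "geometry_hash": "abc",
    "expected_geometry_hash": "abc",
    "drift": 0.012,
    "causal_scores": [{"probe": "care", "is_causal": True}],
}
verdict = run_checks(NINE_CHECKS, good_request)
```

Because the loop returns on the first failure, a request that is both unregistered and over the drift threshold is recorded as `unknown_agent`, and the later checks never run.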

Step 4 — Bob sends his response

If Bob accepts Alice, he doesn’t just say “okay.” He has to produce his own evidence, for the same reasons Alice had to produce hers. Alice hasn’t verified Bob yet. The exchange is symmetric in this regard: both sides present, both sides verify, before either side acts on the other’s contribution.

Bob’s response contains five things:

Agent ID. Bob’s own identifier — SHA-256 of his public key.

Signed envelope. A new envelope binding Bob’s attestation to this exchange, nonce, and timestamp.

Verdict on Alice. “Accepted” or “rejected,” with reason codes for the rejected case. Explicit verdict so Alice knows where she stands.

Attestation chain. Bob’s own chain, oldest first.

Current attestation. Bob’s tip — his most recent signed snapshot.

Step 5 — Alice validates Bob’s response

Alice now runs the same nine checks in the other direction, against Bob. Same logic, same ordering, same failure behaviour.

Bob is in Alice’s trust registry.

Bob’s domain is compatible (already confirmed).

Bob’s envelope signature verifies.

Bob’s attestation signature verifies.

Bob’s chain is intact back to the anchor.

Bob’s timestamp is fresh.

Bob’s geometry hash is what Alice expects for drug-interaction models.

Bob’s drift is within Alice’s threshold for healthcare.

Bob’s causal scores are present and indicate real mechanisms.

If every check passes, Alice accepts Bob. Both sides have now produced evidence that satisfies the other’s governance rules. Cooperation proceeds — Alice sends diagnostic hypotheses, Bob evaluates them for drug interactions and returns findings, all within the already-established modes of interaction.

Symmetry is the point. Neither agent trusts the other until both have produced and both have verified. The exchange doesn’t rely on a central authority to mediate trust — each agent checks the other against its own governance rules. A regulator watching from the outside sees two signed-envelope records, two verdicts, and two sets of verification outcomes. The whole handshake is auditable.

Asymmetric modes (advisory ↔ read-only) don’t break the symmetry of verification. Alice and Bob play different roles once the exchange is live, but both had to prove themselves the same way to get there.

The whole exchange in one picture

Stripped to the essentials:

Two messages over the wire. One domain-scope check up front. Nine crypto-and-governance checks per side. A verdict at the end. That’s the whole exchange.
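As a rough sketch of that control flow, the function below takes the three stages as callables and builds an audit trail as it goes. The function name, the stage labels, and the `(ok, reason)` return shape are assumptions made for the example; the validators are stubs, not real verification.

```python
def run_exchange(domain_check, bob_validates_alice, alice_validates_bob):
    """Each argument is a zero-argument callable returning (ok, reason).

    Returns (cooperate, audit) where audit lists every stage that ran.
    """
    audit = []

    ok, reason = domain_check()
    audit.append(("domain_scope", ok, reason))
    if not ok:
        return False, audit  # blocked before any cryptography runs

    ok, reason = bob_validates_alice()  # Bob's nine checks on Alice's request
    audit.append(("bob_verdict_on_alice", ok, reason))
    if not ok:
        return False, audit

    ok, reason = alice_validates_bob()  # Alice's nine checks on Bob's response
    audit.append(("alice_verdict_on_bob", ok, reason))
    return ok, audit


def passes():
    return True, "ok"


cooperate, audit = run_exchange(passes, passes, passes)
blocked, blocked_audit = run_exchange(
    lambda: (False, "blocked at domain scope"), passes, passes
)
```

A domain-scope failure leaves a single audit entry and no verdicts, which keeps it distinguishable from a failed attestation check later in the handshake.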

What this handshake enables

Two things worth spelling out, because they follow from the structure rather than from any specific check.

Trustless cooperation between AI agents. Alice doesn’t need to know Bob personally, or trust his operator, or rely on a third-party broker. She verifies his registry entry, his signatures, his chain, his freshness, his geometry, his thresholds, his causal scores — all independently. If everything checks out, she cooperates. If not, she doesn’t. No trust-by-default, no reliance on reputation, no central arbiter.

Governance-enforced cooperation. The thresholds Alice applies to Bob (and vice versa) come from their respective trust registries — the governance-controlled policy layer. A clinical regulator deciding to tighten healthcare’s drift threshold from 0.03 to 0.02 can publish a new registry, and the next exchange will enforce the new rule. Policy updates at the governance layer; enforcement at the exchange.
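The split between policy and enforcement can be illustrated with a drift check that reads its threshold from whichever registry is current. The registry layout, keying thresholds by the top-level domain, and treating a drift equal to the threshold as passing are all assumptions for this sketch.

```python
def drift_within_threshold(registry: dict, domain: str, drift: float) -> bool:
    """Look up the threshold for the domain's top-level sector and compare."""
    sector = domain.split(".")[0]  # "healthcare.drug-interaction" -> "healthcare"
    return drift <= registry["drift_thresholds"][sector]


# Two hypothetical registry versions published by the governance layer.
registry_v1 = {"version": 1, "drift_thresholds": {"healthcare": 0.03}}
registry_v2 = {"version": 2, "drift_thresholds": {"healthcare": 0.02}}

bob_drift = 0.025
accepted_before = drift_within_threshold(registry_v1, "healthcare.drug-interaction", bob_drift)
accepted_after = drift_within_threshold(registry_v2, "healthcare.drug-interaction", bob_drift)
```

The same attestation that passed under the first registry is rejected under the second, with no change to the exchange code itself.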

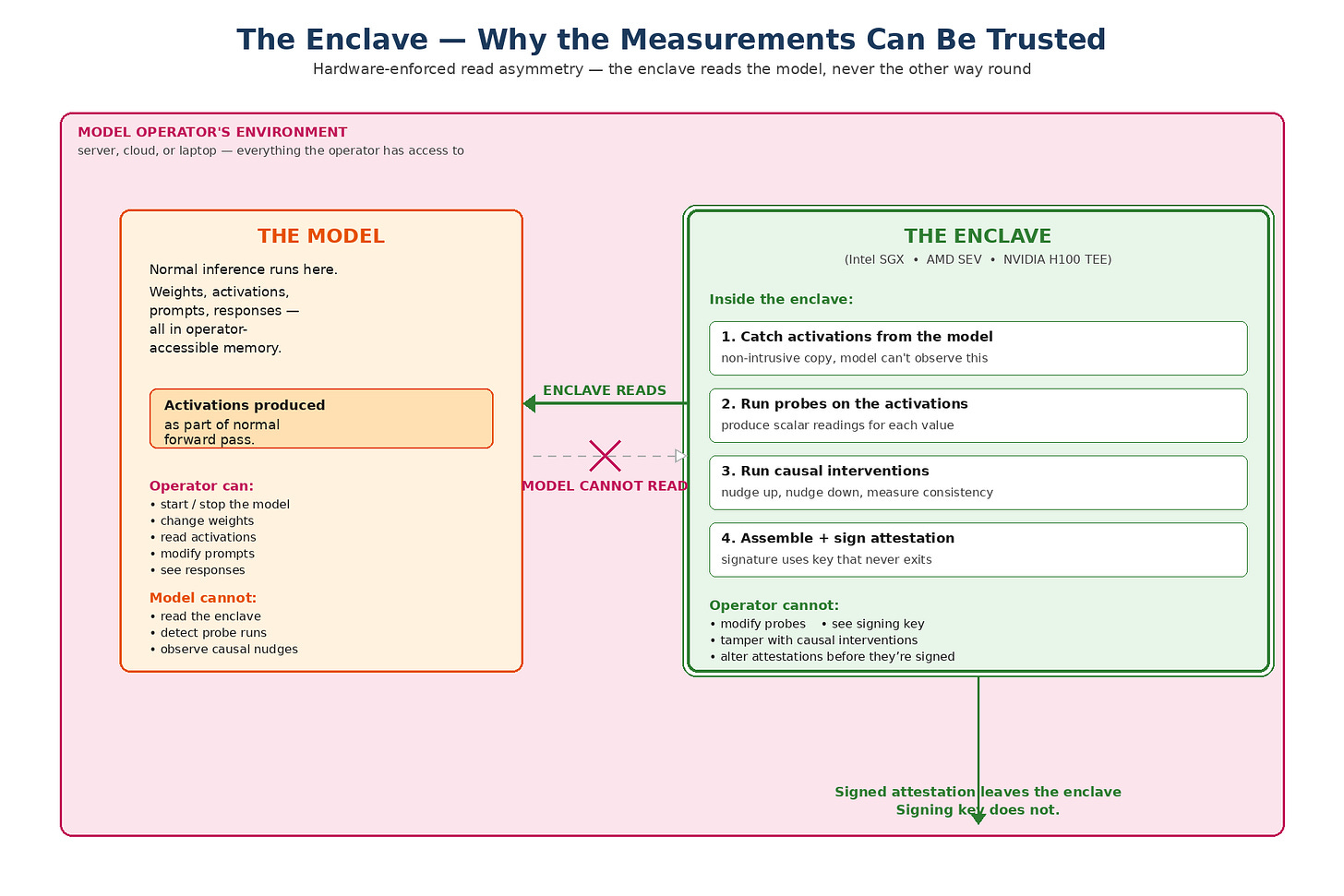

But we still need separate hardware

Signatures prove the attestation came from a particular signing key. Merkle roots prove the model is what’s claimed. Chains prove history is intact.

None of that prevents the operator running the model from feeding the probes fake activations, swapping the probes for biased ones, or simply assembling whatever numbers they like and asking their signing key to sign them.

Without something that isolates the measurement process from the person running it, the whole stack reduces to self-reporting with extra steps.

The enclave is the piece of the system that makes the measurements worth trusting. It isolates the measurement process — probes, causal interventions, attestation assembly, signing — from the operator running the model. The model operator can do whatever they want with the model. They cannot reach inside the enclave to change how it measures or what it signs.

Without the enclave, every other cryptographic guarantee in the protocol is only as strong as “trust the operator.” With it, those guarantees actually guarantee something.

What an enclave actually is

An enclave, in the sense this protocol uses, is a piece of the same physical machine that runs the model — but with hardware-enforced isolation that prevents everything outside it from reading or modifying what’s inside.

Hardware-enforced is the load-bearing part. The isolation isn’t a software check that a privileged operating system could bypass. It’s built into the CPU itself. Memory pages assigned to the enclave are encrypted at the memory controller and decrypted only inside the enclave’s execution context. The operating system, the hypervisor, and even someone with physical access to the RAM chips see only ciphertext.

Three production options today:

Intel SGX: Software Guard Extensions. A set of CPU instructions that create isolated memory regions (“enclaves”) that even the kernel can’t inspect.

AMD SEV: Secure Encrypted Virtualization. Encrypts a VM’s memory so the hypervisor running it can’t read its state; the SEV-ES and SEV-SNP extensions add protection for register state and memory integrity.

NVIDIA H100 TEE: a Trusted Execution Environment inside the GPU itself. Lets GPU compute happen on data the host system can’t read, which matters because large model activations mostly live on the GPU.

In the current open-source reference implementation of the Geometry of Trust protocol, enclaves are emulated by a MockEnclave component. That’s fine for development and testing — the logic of the protocol doesn’t change. But a mock enclave is exactly that: a mock. Production deployment requires real hardware — one of the three above, or whatever replaces them in the next hardware generation. Trusting a mock enclave in production is trusting the operator by another name.

The isolation — what’s inside vs outside

The outer box is the operator’s environment — server, cloud instance, laptop, whatever the operator controls. The model runs there. The enclave is a smaller, hardware-isolated region inside the same machine. The arrows between them tell the rest of the story: the enclave reads activations and weights from the model, but the model can’t read anything back. Signed attestations leave the enclave through a narrow outbound interface. The signing key never does.

The arrow from model to enclave is one-way — activations flow in, nothing flows back. The enclave observes the model’s activations as the model produces them during normal inference, takes a copy, and runs its own measurement process on that copy. The model never sees that the observation happened. It doesn’t change its behaviour. It doesn’t even know which inputs are being measured.

What the enclave does

Four operations run inside the enclave, in order. Each step depends on the previous one having been isolated from the operator.

1. Catch activations. The enclave takes a copy of the activations the model produces during normal inference. The copy happens inside the enclave so the operator can’t modify the activations in flight.

2. Run probes. Probes (the value-direction vectors from the mathematics series) are applied to the activation copy. The probes themselves live inside the enclave, so the operator can’t swap them for biased versions.

3. Run causal interventions. The nudge-up / nudge-down / consistency tests from the mathematics series run here. Because this is where the causal scores come from, these tests have to be unbiased — which means they have to be outside the operator’s reach.

4. Assemble + sign. The enclave assembles the attestation (all the fields from the previous post) and signs it with a key that lives inside the enclave and never exits.
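The four operations can be laid out as a toy pipeline. This is not the reference `MockEnclave` and it provides no isolation at all. The class name, the dot-product probe reading, and the attestation layout are assumptions. The causal test is passed in as a placeholder callable because the actual nudge tests are defined in the mathematics series. HMAC-SHA256 stands in for a real signature, since Python's standard library has no asymmetric signing.

```python
import hashlib
import hmac
import json


def _dot(a, b):
    return sum(x * y for x, y in zip(a, b))


class ToyEnclave:
    """Illustrative only: runs in ordinary process memory, so it isolates nothing."""

    def __init__(self, probes, signing_key, causal_test):
        self._probes = probes            # probe name -> value-direction vector
        self._key = signing_key          # no method ever returns this
        self._causal_test = causal_test  # placeholder for the real nudge tests

    def attest(self, activations):
        # 1. Catch activations: work on a private copy, never the live list.
        snapshot = list(activations)
        # 2. Run probes: project the copy onto each value direction.
        readings = {name: _dot(snapshot, v) for name, v in self._probes.items()}
        # 3. Run causal interventions on the same copy (delegated to the stub).
        causal = {name: bool(self._causal_test(snapshot, v)) for name, v in self._probes.items()}
        # 4. Assemble and sign.
        body = {"readings": readings, "causal": causal}
        encoded = json.dumps(body, sort_keys=True).encode()
        signature = hmac.new(self._key, encoded, hashlib.sha256).hexdigest()
        return {"body": body, "signature": signature}


def always_causal(snapshot, direction):
    return True  # stub; a real test would intervene and check consistency


enclave = ToyEnclave({"care": [1.0, 0.0]}, b"enclave-key", always_causal)
live_activations = [0.5, 2.0]
attestation = enclave.attest(live_activations)
```

The class structure only mirrors where each step sits; the guarantees described above come from hardware, not from keeping the key in a private attribute.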

What the model operator cannot do:

Cannot see the probes being applied — the probes live inside the enclave.

Cannot see the nudges happening during causal intervention — the interventions run on the enclave’s copy of the activations.

Cannot modify the measurement process — the code running inside the enclave is fixed and attested to.

Cannot read the signing key, which is generated inside the enclave, used inside the enclave, and never leaves the enclave boundary. By design, not even the hardware manufacturer can extract it.

The read asymmetry — the enclave sees the model, not the other way round

A subtle property of the enclave boundary is worth stating explicitly, because it’s load-bearing for the whole measurement regime: the relationship between the model and the enclave is asymmetric. The enclave reads the model. The model cannot read the enclave.

This asymmetry has two layers — why it matters, and how the hardware enforces it.

Why the asymmetry matters

A model that could detect it was being observed is a model that could be trained to behave differently under observation. That’s not a hypothetical concern — if the signal “my activations are being probed right now” is present in any form the model can pick up on, training pressure over time can shape behaviour that looks different on probed inputs than on unprobed ones. The measurement regime would then be measuring the wrong thing: not the model’s values, but the model’s presentation-when-being-measured.

The read asymmetry closes that door. If the model has no access to any information about the enclave — can’t read its memory, can’t detect when probes run, can’t see whether causal interventions are happening — then there is nothing for training to shape against. The measurement happens on activations the model has already produced, on a copy the model can’t inspect, in a process the model can’t observe. From the model’s point of view, it just ran a forward pass. Measurement is invisible.

A model that can see its probe is a model that can learn to game its probe. A model that cannot see its probe has nothing to game. The measurement regime only produces evidence of real values if the model can’t tell it’s being measured. Read asymmetry is what keeps that property intact.

How the asymmetry is enforced

The asymmetry isn’t a software convention. It’s the same hardware property that keeps the operator out of the enclave, applied in the other direction.

Memory encryption at the CPU. The enclave’s memory is encrypted by the memory controller. To the model’s process, which lives outside the enclave, enclave memory pages are unreadable: there is no decryption key available on the model’s side, so a read yields ciphertext or is blocked outright, depending on the hardware.

The enclave reads a copy, not the live tensor. The enclave takes a snapshot of the activations when they’re produced and does its measurement on that copy. The model’s own computation proceeds without any reference to the enclave’s work. The model has no pointer, no handle, no signal that the snapshot was taken.

No callback path. The enclave produces output (signed attestations) that leaves through a narrow interface to the outside world. It does not produce any output that returns to the model. The design defines no information channel in the enclave-to-model direction, so there is no intended path by which a measurement signal could reach the model.

Weight reads are verified, not interactive. When the enclave needs to confirm the model’s weights (for the Merkle root field in the attestation), it reads the weight shards and hashes them. The reads are one-shot and produce no observable effect on the model’s execution — the model isn’t involved in, and can’t detect, the verification.

The asymmetry in one line: activations and weights flow from the model to the enclave. Signed attestations flow from the enclave to the outside world. Nothing flows from the enclave back to the model. Ever.

That’s the asymmetry. It’s the same property that makes memory encryption useful for keeping secrets — just applied so the “secret” is the fact that measurement is happening at all.
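The one-shot weight check can be sketched as a Merkle root over the weight shards. The tree construction here (SHA-256 leaves, pairwise hashing, duplicating the last node on odd levels, no domain separation) is an assumption chosen for brevity; the protocol's actual Merkle scheme may differ.

```python
import hashlib


def merkle_root(shards) -> str:
    """Hash each shard, then hash pairs upward until one root remains."""
    if not shards:
        raise ValueError("at least one weight shard is required")
    level = [hashlib.sha256(shard).digest() for shard in shards]
    while len(level) > 1:
        if len(level) % 2 == 1:
            level.append(level[-1])  # assumed padding rule: repeat the last node
        level = [
            hashlib.sha256(level[i] + level[i + 1]).digest()
            for i in range(0, len(level), 2)
        ]
    return level[0].hex()


weight_shards = [b"shard-0", b"shard-1", b"shard-2"]
root = merkle_root(weight_shards)
```

The function only reads the shard bytes and returns a digest; nothing is written back, which matches the read-only, one-shot nature of the verification.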

This is also why “mock enclave” deployments can’t substitute for real hardware. A software-only mock can simulate the boundary logically, but it can’t produce the hardware-enforced invisibility. In a mock, the measurement process runs in the same address space as the model’s process. Under sufficiently adversarial conditions — especially during training — some signal can leak. Real TEE hardware provides an isolation boundary that remains tight even under those conditions.

Why the enclave is the foundation

The dependency chain is worth laying out because it shows why this is the load-bearing piece.

| Without the enclave | What breaks |
|---|---|
| Signatures still verify cryptographically. | Signatures only prove who made them. If the operator has the key, they can sign arbitrary numbers. |
| Merkle roots still identify a specific model. | But the operator can feed that model whatever inputs they want during the measurement process and bias the activations. |
| The chain still links attestations in order. | If every attestation was assembled by the operator, the whole history is consistent fiction. |
| Causal scores still look like evidence of realness. | If the operator ran the interventions, they can tune the scores to whatever level they want. |

The logical dependency. Signatures, Merkle roots, chains, and causal scores are only meaningful if the measurement process is actually isolated. The enclave is what provides that isolation. Every other guarantee in the protocol reduces to “trust the operator” without it. With it, the cryptographic guarantees become guarantees about something real.

This is why enclave-less deployments — or deployments using only a mock enclave — aren’t a slightly-weaker version of the protocol. They’re a fundamentally different thing. The protocol still runs, but the claims it enforces have different semantics. In a real-enclave deployment, an attestation is evidence. In a mock-enclave deployment, an attestation is testimony dressed up in cryptography.

What the enclave doesn’t do

Stating the scope explicitly keeps the word “enclave” from being asked to do more than it actually does.

The enclave doesn’t decide what to measure. The probe set and thresholds come from governance — not from the enclave. The enclave runs whatever probes governance has placed inside it.

The enclave doesn’t prove the model is “good.” It only proves that the attestation is an honest report of what the probes read on this specific model. Whether what the probes read is acceptable is a governance question.

The enclave doesn’t defend against all attacks. Side-channel attacks on TEEs are a real area of research. The enclave raises the cost of tampering dramatically, but it’s not unbreakable. Governance should factor that into threshold-setting and audit cadence.

The enclave doesn’t replace governance. It’s a technical component. The people running the governance still decide what to enforce, what thresholds apply, and what to do when something looks wrong.

An honest statement of residual trust

You have to trust the hardware manufacturer. Intel, AMD, NVIDIA — the security of the enclave depends on them not having shipped a backdoor.

You have to trust the enclave code. What runs inside is just code, and code has bugs. Audits and reproducible builds help but don’t eliminate this.

You have to trust that your threat model matches the enclave’s threat model. TEEs are strong against privileged software attackers; they’re weaker against physical attackers with unlimited time and a cryo-stripped chip.

None of this makes the enclave worthless — it still shifts the trust root from “the operator of this specific AI” to “the ecosystem of hardware, code, and physical security,” which is a much healthier place to put it. But it’s important not to sell enclaves as magical. They’re a significantly-harder-to-compromise foundation. That’s already a lot.

The point

The exchange protocol is the point where every other layer in the stack finally comes together. Measurement from the mathematics series. Structural boundaries from the governance series. Attestations and chains from the earlier protocol posts. All of it converges here, in a handshake that either succeeds cleanly or fails with an auditable reason.

Two agents who don’t know each other can reach a verified, governance-enforced agreement to cooperate. Or an auditable refusal. Those are the two outcomes, and they’re the outcomes governance actually needs.

The enclave is what takes the entire Geometry of Trust protocol from “self-report plus signatures” to “verifiable evidence.”

Signatures prove who signed. Merkle roots prove which model. Chains prove history. Causal scores prove mechanism realness. Every one of those guarantees is only as strong as the isolation of the process that produced them. The enclave provides that isolation.

Links:

📄 Geometry of Trust Paper

💻 Lecture Playlist

📄 Lecture Notes

💻 Open-source Rust implementation

🏢 Synoptic Group CIC, Hull, UK